Today, we are super excited to formally announce the alpha-release of ggsql . As the name suggests, ggsql is an implementation of the grammar of graphics based on the SQL syntax, bringing rich, structured visualization support to SQL for the first time.

In this post we will go over some of the motivations that lead us to develop this tool, as well as give you ample examples of its use; so you can hopefully get as excited about it as we are (if you can’t wait to get excited, jump straight to the examples ).

Why ggsql?#

Writing a new visualization library from scratch is always a big task and you may wonder why we have decided on this endavour. Some of the reasons are:

- We want to engage with and help data analysts and data scientists that predominantly work in SQL

- SQL and the grammar of graphics just fits together extremely well

- We want to create an extremely powerful, code-based, visualization tool that doesn’t require a runtime

- We have learned so much after 18 years of ggplot2 development and maintenance that it feels right to apply it to a blank canvas

Let’s discuss each in turn.

Hello, SQL user!#

While first R and then Python captured all the attention of the data science revolution, SQL chucked along as the reliant and powerful workhorse beneath it all. We recognize that there are large portions of people that work with data that do so only or predominantly in SQL. The choice they have for visualizing their data are often suboptimal in our view:

- Export the data and use R or Python which may not be within their comfort zone

- Use a GUI-based BI tool with poor support for reproducibility

- Rely on one of the few tools that exist for creating visualizations directly within the query that we feel are not powerful or ergonomic enough

Our goal when designing ggsql was that the syntax should immediately make sense to SQL users, tapping into their expectation of composable, declarative clauses.

Apart from offering a better way to visualize their data, ggsql is also a way to invite SQL users into our rich ecosystem of code based report generation and sharing build on top of Quarto .

Declarative wrangling, declarative visualization#

If you are reading this with no prior knowledge of SQL, here’s a very brief recap: SQL is a domain specific language for manipulating relational data stored in one or more tables. The syntax is based on the concept of relational algebra which is a structured way to think about data manipulation operations. The semantics defines a set of modular operations that are declarative rather than functional, allowing the user to compose very powerful and custom manipulations using a well-defined set of operations.

If you are reading this with no prior knowledge of the grammar of graphics, here’s a very brief recap: The grammar of graphics is a theoretical deconstruction of the concepts of data visualization into its modular parts. While purely theoretical, tools such as ggplot2 have implemented the idea in practice. The semantics defines a set of modular operations that are declarative rather than functional, allowing the user to compose very powerful and custom visualizations using a well-defined set of operations.

From the above, slightly hyperbolic, overview it is clear that both SQL and the grammar of graphics have a lot of commonality in their approach to their respective domains. Together they can offer a very powerful and natural solution to the full pipeline from raw data to final visualization.

No runtime, no problem#

Why does it matter that ggplot2 and plotnine requires R and Python installed respectively? There are clear benefits to a single, focused executable to handle data visualization:

- Embedding a small executable in other tools is much easier than bundling R/Python (or requiring them to be installed)

- Clear scope and need makes it easier to sandbox and prevent malicious code execution (either deliberately or in error)

Both of the above points make ggsql a much more compelling option for integrating into tools such as AI agents assisting you in data analysis, or code based reporting tools that may execute code in different environments.

You may think we have had to swallow some bitter pills by moving away from an interpreted language, but it has also given us a lot. Most importantly, the rigid structure means that we can execute the whole data pipeline as a single SQL query per layer on the backend. This means that if you want to create a bar plot of 10 billion transactions you only ever fetch the count values for each bar from your data warehouse, not the 10 billion rows of data. The same is true for more complicated layer types such as boxplots and density plots. This is in stark contrast to the situation in most visualization tools that first materializes the data (potentially choking on the size), then perform the necessary computations on it, before proceeding to plot it.

We are now wise beyond our years#

18 years of ggplot2 development and maintenance also means 18 years of thinking about data visualization syntax, use, and design. I am not a boastful person but I do believe that gives us some expert knowledge on the subject matter. However, not all of this knowledge can be poured back into ggplot2. There are decisions and expectations established many years ago that we have to honor, or at least only challenge very gradually (which we do on occasions).

ggsql is a blank slate. Not only in the sense that we are building it from the ground up, but also in that it is built for an environment with no established expectations for a visualization tool. I cannot stress how liberating and invigorating this has felt, and I am positive that this shines through in how ggsql feels to the user.

Meet ggsql#

That was a lot of words about the why. Hopefully you come away from it all with a sense of this whole thing being justified, ready to learn more about it. If so, read on!

A (simple) example#

Let’s start with some code even though we haven’t introduced the syntax yet. I promise we will go through it below. The example is an adaptation of a visualization created by Jack Davison for TidyTuesday.

WITH astronauts AS (

SELECT * FROM 'astronauts.parquet'

QUALIFY ROW_NUMBER() OVER (

PARTITION BY name

ORDER BY mission_number DESC

) = 1

)

SELECT

*,

year_of_selection - year_of_birth AS age,

'Age at selection' AS category

FROM astronauts

UNION ALL

SELECT

*,

year_of_mission - year_of_birth AS age,

'Age at mission' AS category

FROM astronauts

VISUALIZE age AS x, category AS fill

DRAW histogram

SETTING binwidth => 1, position => 'identity'

PLACE rule

SETTING x => [34, 44], linetype => 'dotted'

PLACE text

SETTING

x => [34, 44, 60],

y => [66, 49, 20],

label => [

'Mean age at selection = 34',

'Mean age at mission = 44',

'John Glenn was 77\non his last mission -\nthe oldest person to\ntravel in space!'

],

hjust => 'left',

vjust => 'top',

offset => [10, 0]

SCALE fill TO accent

LABEL

title => 'How old are astronauts on their most recent mission?',

subtitle => 'Age of astronauts when they were selected and when they were sent on their mission',

x => 'Age of astronaut (years)',

fill => nullThat was a lot of code, but on the flip-side it allows us to cover a lot of the most important aspects of the syntax with one example.

At the topmost level there are two parts to an SQL query: The SQL query, and the visualization query. The SQL query is anything from the beginning to the VISUALIZE clause. It is your standard SQL, and it accepts anything your backend accepts (in this blog post we use a DuckDB backend). The result of the query is funnelled directly into the visualization rather than being returned as a table like you’d normally expect. It should be noted that the SQL query part is optional. If your data is already in the right shape for plotting you can skip it and instead name the source directly in the VISUALIZE clause:

VISUALIZE year_of_selection AS x, year_of_mission AS y FROM 'astronauts.parquet'Since the point of this post is not to teach you SQL we won’t spend much more time discussing the SQL query part. The main take away is that everything before the VISUALIZE clause is pure SQL, any resulting table is automatically used by your visualization, and any table or CTE created there is available for referencing in the visualization query.

Now, let’s look at the new part — everything from VISUALIZE and onwards. VISUALIZE marks the end of the SQL query and the beginning of the visualization query (or VISUALISE for those who prefer UK spelling). It can stand on its own or, as we do here, have one or more mappings which will become defaults for every subsequent layer. Mappings are purely for relating data to abstract visual properties. A mapping is like a SELECT where you alias columns to a visual properties (called aesthetics in the grammar of graphics). In the visualization above we say that the age column holds the values used for x (position along the x axis) and the category column holds the values used for fill (the fill color of the entity). We do not say anything about how to draw it yet.

Following the VISUALIZE query we have a DRAW clause. DRAW is how we add layers to our visualization. There is a large selection of different layer types in ggsql. Some are straightforward: e.g. point for drawing a scatterplot. Some are more involved: histogram (which we use here) requires calculation of derived statistics like binned count. A visualization can have any number of layers and layers will be rendered in the sequence they are defined. DRAW has a sibling clause called PLACE. It is used for annotation and works like DRAW except it doesn’t get data from a table but rather as provided literal values. It follows that our visualization above contains three layers: A histogram layer showing data from our table, a rule annotation layer showing precomputed mean values for each category, and a text annotation layer adding context to the visualization. It is worth mentioning that a layer does not correspond to a single graphical entity. Like with the text layer above, each layer can render multiple separate entities of its type so there is no need to have e.g. 3 line layers to render line plots for 3 different categories.

After the DRAW and PLACE clauses we have a SCALE clause. This clause controls how data values are translated into values that are meaningful for the aesthetic. In our case, the category column holds the strings “Age at mission” and “Age at selection” which doesn’t in itself translate to a color value. The clause SCALE fill TO accent tells ggsql to use the “accent” color palette when converting the values mapped to fill to actual colors. Scales can be used for much more, like applying transformations to continuous data, defining break points, and setting specific scale types (like ordinal or binned).

The last clause in our visual query is LABEL which allows us to add or modify various text labels like title, subtitle, and axis and legend titles.

Stepping back#

That was a mouthful. But there are two very silvery linings to it all:

- You now know the most important aspects of the syntax (there are more, of course, but you can grow into that)

- Many visualization queries will be much simpler than the one above

To support the last claim, let’s make a boxplot of astronaut birth year split by sex:

VISUALIZE sex AS x, year_of_birth AS y FROM 'astronauts.parquet'

DRAW boxplotThat’s much shorter but still, if you are coming from a different plotting system you may even think this is overly verbose (e.g. compared to something like boxplot(astronauts.sex, astronauts.year_of_birth)). Yes, it is longer, but it is also more structured, composable, and self-descriptive. These features (which are a direct result of its grammar of graphics lineage) means that both you and your future LLM coding buddy will have an easier time internalizing the workings of all types of plots that can be made. The 18 years of dominance of ggplot2 (which shares these features) in the R ecosystem is a testament to this.

As an example, let’s change the above plot to instead show the same relationship as a jittered scatterplot.

VISUALIZE sex AS x, year_of_birth AS y FROM 'astronauts.parquet'

DRAW point

SETTING position => 'jitter'Or perhaps the jitter follows the distribution of the data so it doubles as a violin plot:

VISUALIZE sex AS x, year_of_birth AS y FROM 'astronauts.parquet'

DRAW point

SETTING position => 'jitter', distribution => 'density'As you can see the syntax and composable nature makes visualization iteration very ergonomic, something that is extremely valuable in both explorative analyses and visualization design.

Behind the curtains#

With a passing understanding of how to use ggsql, let’s discuss what goes on behind the scenes. At its core ggsql is a modular Rust library that is split into a Reader module, a Plot module, and a Writer module. Both the Reader and Writer can be swapped out, leaving the Plot module as the only constant.

The Reader#

This module is responsible for communicating with your data backend, i.e. your SQL database. While this makes it sound like you need to have a complicated database setup in order to use ggsql, this is not the case. While ggsql ships with an ODBC and Snowflake reader, the default reader is DuckDB . DuckDB allows you to ingest e.g. parquet and csv files directly (no installation necessary) as we have done above, as well as talk to remote SQL databases through its rich (extension ecosystem)[https://duckdb.org/docs/current/core_extensions/overview].

The Plot#

The Reader module communicates with the Plot module in two ways. It tells the Plot module about it’s particular flavor of SQL and allows it to execute queries on it, returning the resulting data. On the other hand, the Plot module is responsible for reading the visualize query and parse it into a plot representation that can be handed off to the Writer. Part of this is to construct a single query for each layer in the visualization (each DRAW query) that returns the data needed for that layer. All computations necessary for the layer is part of this query and are thus handled by the backend. For instance, the query for the boxplot layer includes logic for calculating quantiles etc, all happening in the database without the need for materializing the raw data.

The Writer#

This module is responsible for the output. It gets the structured representation of the visualization from the Plot module and converts it into the actual visual representation of it. Currently we only have a Vegalite writer but we are planning to expand this very soon to shed our reliance on Vegalite idioms.

Tooling#

More important than how it works underneath is perhaps how you use it. Recognizing that people may wish to use it in different ways we provide ggsql in a number of different distributions:

Jupyter kernel#

As part of the standard installation we provide a ggsql Jupyter kernel. This allows you to use ggsql directly within Jupyter notebooks and Quarto documents, both for executing SQL and ggsql queries. It maintains a persistent connection throughout the document so that visual queries can reference tables and views created in earlier blocks.

CLI#

The second part of the standard installation is a command line tool for executing queries from the command line, either by providing the query in a file or directly in the call. It also provides parsing and validation tools.

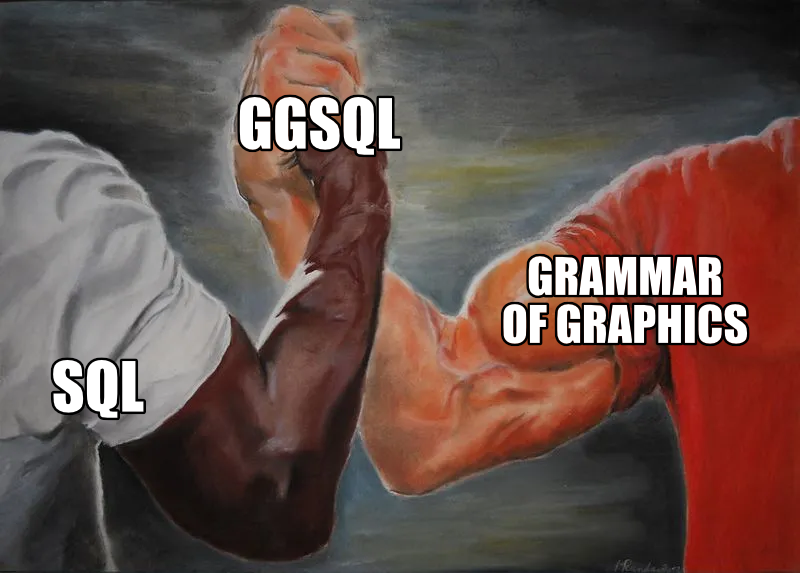

VS Code + Positron extension#

The ggsql VS Code extension provides a tailored ggsql experience for Visual Studio Code and in particular for Positron (see screenshot below). In Positron you will get a ggsql console where you can write and execute queries, with the result appearing in the plot pane along with your R and Python plots. You also get support for sending commands from a ggsql script to the console for execution, like you may know from R and Python. Lastly, in Positron we interface with the Connection pane allowing you to hook up ggsql to any database you have there and immediately begin visualizing the data in your data warehouse.

Python package#

The ggsql package , available on PyPI, provides direct bindings to the Rust library, allowing you to register data, send queries, and visualize the result.

R package#

The ggsql package, soon to be available on CRAN, is an R sibling to the python package, providing the same level of direct bindings as the Python package does. In addition it provides a knitr engine that RMarkdown and Quarto can use which provides bidirectional data access between R, Python, and ggsql code blocks.

WASM binary#

ggsql compiles to WASM meaning that it can run in the browser. You can see this in effect at the ggsql website where all code blocks are editable with instant updates of the visualization below. The wasm binary will be available on NPM in the near future.

The future#

We are nearing the end of a rather long announcement — thanks for sticking with us. In the very first line we called this an alpha-release which implies that we are not done yet. To get you as excited about the future as you hopefully are about the present state of ggsql, here is a non-exhaustive list of things we want to add.

- New high-performance writer, written from the ground up in Rust

- Theming infrastructure

- Interactivity

- End-to-end deployment flow from Posit Workbench/Positron to Connect

- Fully fledged ggsql language server and code formatter

- Support for spatial data

What does this mean for ggplot2 development#

If you are a current ggplot2 user you may have read this with a mix of fear and excitement (or maybe just one of them). Does this mean that we are leaving ggplot2 behind at Posit to focus on our new shiny toy? Not at all! ggplot2 is very mature and stable at this point but we will continue to support and build it out. We also hope that ggsql can pay back all the experience from ggplot2 that went into its development by informing new features in ggplot2.

Want more?#

If you can’t wait to learn more about ggsql and begin to use it you can head to the Getting started section of the ggsql website for installation instructions, and a tutorial, or head straight to the documentation to discover everything ggsql is capable of. We can’t wait for you to try it out and hear about your experiences with it.